2. Emergentism and Reductionism

Answering the question "What is there in this world?" requires, in the first instance, some criteria for picking out real “entities”. Customarily, such criteria start from some statement whose veracity is taken to be beyond question, and entities are then tagged as real if they agree with such criteria or can be derived from them via legitimate inferences. Finding precise formulations for criteria of this kind has turned out to be a difficult task, though.

Alternatively, one can opt for criteria exhibiting relative reliability, closer to the kind used by working scientists in deciding whether their results are trustworthy. Among them, the one referred to as robustness[2] is laudable for its versatility. It reads: entities are robust if they are accessible (detectable, measurable, derivable, definable, producible, and the like) in a variety of independent ways. It is clear that robustness applies both to “objects” and to the “structural features” stemming from the specific ways these objects arrange themselves as a consequence of their mutual interactions. Consequently, robustness can equally be applied to material stuff, its properties and its internal connectivity, and can also be used to demarcate the parts and the whole of the systems under consideration. Furthermore, the requirement that the means of access be many, diverse and independent implies that the concept of accessibility is not restricted to “experimental manipulation”, but extends to the realm of logical or mathematical derivation, and to the many spots in between.

The approach outlined above puts on firm ground the analysis of the so-called causal relationships derived from the “mutual interactions” among the constituent parts, along with their internal connectivity, which reflects the “structural features” of the actual arrangement of the material stuff of the system. Causal relationships yield patterns of causal networks. These networks, under some constraints, arrange into higher-level patterns known as levels of organization, which consist of hierarchical divisions of stuff (not necessarily only material stuff) organized by part/whole relationships. This is a central concept for the present issue.

The formation of the first stars, the nucleosynthesis that made the heavier-than-hydrogen nuclei, the coalescence of electrons from the plasma into the vicinity of these nuclei (which liberated the photons, releasing the Cosmic Microwave Background Radiation, and yielded the atoms that subsequently bound to each other to make molecules), and so on, each yield specific levels of organization with a radically distinct organization of matter, increasing in complexity and bearing their own rules and causal relationships.

The notion that, at (sufficiently) complex levels of organization and under certain constraints, novel properties (and/or substances) may arise from the mutual interactions of the constituent parts of the lower level of organization, and yet be irreducible to them, is referred to as Emergence[3].

For instance, the electrons and nuclei of a metallic crystal are organized by its electronic, fermionic band structure, with the one-electron-per-state (spin-orbital) rule dictating whether the crystal is in an electrically conducting or insulating state. At a higher level of organization, under special constraints, the nuclear vibrations (phonons) can interact with the (valence) electrons so that the latter arrange themselves into the so-called Cooper pairs. Cooper pairs behave as bosons (resulting from the singlet-spin pairing of two fermions) and can coalesce, at sufficiently low temperatures (the constraint), into a single, robust and coherent Bose-Einstein condensate, which can conduct electricity without resistance, giving rise to the superconducting state. Thus, we see that the interactions between the constituent parts of the lower level of organization, electrons and nuclear phonons, yield, under certain constraints, an entirely new and unexpected kind of matter (bosons “made” from the fermions) with the subsequent emergence of an unexpected property, superconductivity, at the higher level of organization.

Emergentism claims that the world is entirely made of layered strata of levels of organization, physical structures which may be either simple or complex. But the complex ones are not mere aggregates of the simpler ones. Each layer (level of organization) hosts the appearance of “novel qualities”, arising from but not present in the lower layers. And even though one may think that lower-level properties constitute primitive causal powers, it is at each higher level of organization, not below, that the laws connecting its emergent physical structures and properties should be looked for. Emergent laws are simply not present at the lower level of organization.

In order to put Emergentism in its proper perspective, let us briefly comment on its “antagonist” methodology for analyzing the world, Reductionism. Here the explanatory strategy consists of breaking down complex physical systems to the point where no further breaking is possible. Such indivisible parts should, by assumption, exhibit less complicated properties, so that complex collective behavior can likely be reduced to combinations of the simplest, smallest units.

Leibniz’s calculus[4], a landmark advancement in mathematics, illustrates these ideas well, for he presented a procedure to reduce complex processes to their infinitely small, infinitely “simple” components, i.e., the “infinitesimals”, so small that they are devoid of any complexity. According to Leibniz, all complex processes can be chopped into a series of infinitely small, infinitely simple, tractable events. The overall process is then described simply by properly adding up the outcomes of these events.

The promise of Reductionism is that the (re)construction of the complex collective behavior of physical systems starting from the properties of their smallest components is doable. Namely, Reductionism advocates the quest for a discrete world and for explanations framed at the lowest size scale, where complexity arises from simple operations repeated a myriad of times. All that remains to be done is then to find the algorithm underlying these operations.[5]

This is the dream of reductionists: the idea that there should exist a unique, all-embracing, ultimate fundamental theory into which all currently existing theories should converge and merge. The earlier successes of reductionism in the analysis of phenomena susceptible of decomposition (not all phenomena are) have given the false impression that investigating the interactions of the lower-level components is more informative than investigating the organizational features of these components at the higher level. If the lowest, most fundamental level of organization can be found and the properties of its parts understood, theoretically described and codified in precise laws, then starting from this information one should, by simply applying legitimate inferences, be able to find all possible higher levels of organization and fully describe their intrinsic properties. After all, is it not the most fundamental goal of science to give an intelligible theoretical description of “disparate” phenomena by accounting for them all on the basis of “primogenial” principles assisted by logically correct inference rules? Namely, to find the explanation for the world and for all it contains.[6]

It sounds like a commendable aim, but it faces a number of criticisms that should be given serious consideration. We enumerate some below.

First, the existence of observable and measurable phenomena at the macroscopic (high) level which are insensitive to the finer details of the microscopic (lower) level is well documented, i.e., they are robust at their level of organization. These properties are well understood and have been successfully codified by means of high-level laws, which have been found to be irreducible to the more fundamental laws of the lower level. The existence of such properties is highly relevant, for it challenges the usefulness (perhaps uselessness is more appropriate) of the tentative unique all-embracing ultimate fundamental theory: it shows that, for at least some fundamental facts of nature, such a theory is irrelevant.[7]

Second, higher levels of organization always have much longer response times than the lower levels. Consider biological time scales: biochemical ∼1 second; metabolic ∼1 minute; epigenetic ∼1‒10 hours; developmental ∼days‒years; evolutionary ∼10³‒10⁶ years. Thus, since reducing biology to quantum mechanics requires solutions of its Hamiltonian with an energy accuracy ∆E∼ℏ/∆t, where ℏ=1.054×10⁻³⁴ Joule×second is the reduced Planck constant, it turns out that even for the biological processes with the shortest response times we require an energy accuracy of ∼10⁻³⁴ Joule. This is at least ten orders of magnitude more accuracy than what current measurements of electron dynamical processes can offer.
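The estimate ∆E∼ℏ/∆t can be tabulated for the time scales quoted above. The following is only a rough sketch: where the text gives a range, the representative ∆t chosen here is an assumption, as is the conversion of one year into seconds.

```python
# Energy resolution Delta_E ~ hbar / Delta_t for the biological time scales.
HBAR = 1.054e-34            # reduced Planck constant, joule*second (value quoted in the text)
SECONDS_PER_YEAR = 3.156e7  # assumption: one year taken as ~365.25 days

# Representative response times in seconds. Where the text gives a range,
# the value picked here is an illustrative assumption.
time_scales = {
    "biochemical (1 s)": 1.0,
    "metabolic (1 min)": 60.0,
    "epigenetic (10 h)": 10 * 3600.0,
    "developmental (1 yr)": SECONDS_PER_YEAR,
    "evolutionary (1e6 yr)": 1e6 * SECONDS_PER_YEAR,
}


def energy_accuracy(delta_t):
    """Return hbar / delta_t in joules for a response time delta_t in seconds."""
    return HBAR / delta_t


required = {name: energy_accuracy(dt) for name, dt in time_scales.items()}

for name, delta_e in required.items():
    print(f"{name:24s} Delta_E ~ {delta_e:.2e} J")
```

The slower the process, the finer the energy resolution that a quantum-mechanical description would have to deliver, so the biochemical case is already the most favorable one.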

Third, theories are expressed in terms of consistent mathematical logical systems. Now, Gödel’s first incompleteness theorem proves that for such mathematical systems, provided they are expressive enough to encode arithmetic, there are always “truths” that cannot be demonstrated within the system by its own axioms and rules of inference[8]. They are inevitably incomplete, for there will always be “truths” in the system that require methods of proof and points of view that transcend the system itself. The direct implication is that all theories formulated in terms of consistent mathematical systems are infinite, in the sense that new discoveries (the “truths” mentioned above) will always be possible, so they will come sooner or later, leaving the existence of the unique all-embracing ultimate fundamental theory in a delicate position, to say the least.

Fourth, the process of theory reduction, whichever it is, assumes that the set of theories being reduced can always be totally ordered.[9] That is true for classical theories presupposing a Boolean propositional logic, but not for quantum theories, for instance. The latter thus constitute a set of theories which cannot be totally ordered and, consequently, cannot be reduced.[10]

On the other hand, Emergentism is customarily defined in terms that oppose bottom-up causal relationships between the levels of organization, because of the fundamental discontinuity of the physical laws among the various levels of organization of physical systems.[11] Thus, the world is seen as stratified into a number of distinct levels of organization, with a strong hierarchical rule for the decomposition of the higher-level ones into lower-level ones. Namely, “decomposition” is allowed but “reconstruction” is (often) forbidden. Furthermore, Emergentism claims that it is in principle impossible to derive the higher-order behavior even from complete knowledge of the lower-level behavior. In other words, only after having observed the emergence of a higher-level property do we realize that, even with complete knowledge of all properties of the lower-level components along with their mutual interactions, one could never have predicted[12] all properties of the higher-level organization.

This conceptualization of Emergence, though, has received substantial criticism. Perhaps the most acute objection, formulated by Kim, states that emergence could equally well be described as epiphenomenal, i.e., as secondary phenomena seen at the higher level concurrently with the setting of the lower-level “necessary conditions”, but not necessarily having been caused by them.[13] The full formulation is subtle and knotty, but can be (over)simplified to something like this. Assume (i) no magic, (ii) that all composite systems are made of simpler parts, and (iii) that these parts plus their causal powers (i.e., physical properties) constitute the substrate of any physical interaction. Then, all properties of higher-order organizations (made of these same parts and nothing more, no magic!) must ultimately be realized by these more basic interactions among the parts. This objection has a close connection with the concept of supervenience: the “relationship” that emergent properties have to the base properties that give rise to them. The “relationship” implies that there cannot be emergent properties without a change of the physical substrate properties, a change that can only be produced by the emergent properties themselves. This leads to a circular, erroneous argument, with properties of the parts explaining the properties of the composite, which in turn explain the properties of the parts, and it calls for a clarification of the part/whole (composite system) issue.

Humphreys has provided one,[14] though not the only one. He argues that parts are, and should be, changed by virtue of being engaged in interactions with each other when included in larger composite entities. Thus, what were once independently identifiable parts cease to exist as such, breaking the circularity mentioned above and putting emergence back on sound conceptual ground.[15]

Two additional remarkable recent advancements are also worth mentioning. The first, and perhaps the most influential in the field, is the inclusion of the dynamic perspective in the study of distinctive and discontinuous changes in aggregate behavior triggered by lower-level dynamics. Specifically, phenomena like the growth of snow crystals (fractality), or the transition to the superconducting state at low temperatures, provide a set of so-called self-organization processes[16] that meet the general criteria of emergent phenomena: high-level organization properties emerging from parts lacking such properties.

The analytical solution of the equations of motion for these processes is generally impossible, but they can nevertheless be modeled by step-by-step iterative simulations. Such simulations, which iterate simple algorithms millions of times, often yield surprisingly complex behavior that could not have been anticipated analytically. The concurrent development of appropriate computational methods has enabled the advancement of sophisticated graphical processing techniques for visualizing these highly non-linear dynamical processes “in action” and, more importantly, has provided the means to create “model” systems with full control over the inter-part interaction parameters.

This has paved a new way to think about emergence. It involves algorithms that iterate simple local operations on each of the many cells arranged in large arrays, a construction known as a cellular automaton. Computation demonstrates that complex, dynamical, regular patterns often do show up spontaneously.[17] It is this logic of generating complex patterns out of simple iterated operations that has become a new paradigm of emergence. Moreover, the analogy between this logic and that of the many natural processes that involve local interactions has spawned claims that it could offer a better framework for understanding complex phenomena in general.[18]
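To make the idea of iterating a simple local operation concrete, a one-dimensional (elementary) cellular automaton can be sketched in a few lines. The choice of Wolfram's rule 110, the periodic boundaries, the array width and the single-cell initial condition are all assumptions made here for illustration; none of them is taken from the works cited above.

```python
def step(cells, rule=110):
    """One synchronous update of an elementary cellular automaton.

    Each cell is updated from its own state and that of its two nearest
    neighbours (periodic boundaries). `rule` is the Wolfram rule number
    (0-255), whose binary digits encode the outcome for each of the
    eight possible neighbourhoods.
    """
    n = len(cells)
    new_cells = []
    for i in range(n):
        left, centre, right = cells[i - 1], cells[i], cells[(i + 1) % n]
        neighbourhood = (left << 2) | (centre << 1) | right  # integer 0..7
        new_cells.append((rule >> neighbourhood) & 1)
    return new_cells


def run(width=64, steps=32, rule=110):
    """Iterate the rule from a single live cell and return all generations."""
    cells = [0] * width
    cells[-1] = 1  # single live cell at the right edge (arbitrary choice)
    history = [cells]
    for _ in range(steps):
        cells = step(cells, rule)
        history.append(cells)
    return history


if __name__ == "__main__":
    for row in run():
        print("".join("#" if c else "." for c in row))
```

Calling `run(rule=90)` instead applies an exclusive-or of the two neighbours at every step, which is another simple local rule that can be explored with the same code.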

In this vein, the exploration of non-equilibrium dynamic systems has revealed interesting behaviors. Probably the best-known case is that of the “attractors”, discovered by Lorenz[19] in 1963 while studying the computational modeling of fluid flow in the atmosphere. He was interested in modeling the way air moves when heated from below and cooled from above.

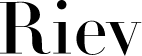

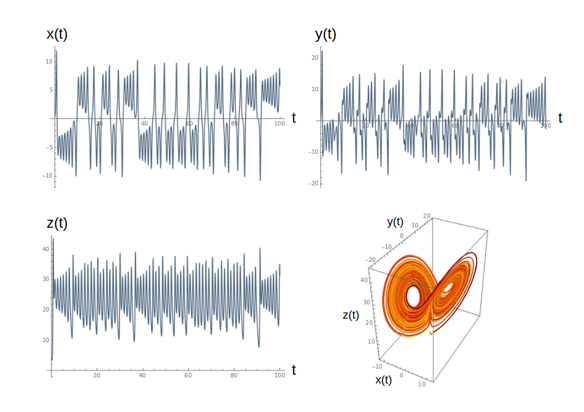

The model describes[20] how three key properties of this system evolve in time: x, the rate of air flow; y, the temperature difference between the ascending and descending air columns; and z, the distortion from linearity of the vertical temperature profile. The calculations reveal a pseudo-chaotic behavior for all three properties over time, but the parametric-plot trajectory shows that it remains confined within a finite domain and traces out an orderly, twisted, butterfly-like pattern[21], as seen in Figure 1.

Figure 1. Plots of the x(t), y(t) and z(t) functions and the parametric t-plot of the solutions of the three coupled differential equations of motion: dx(t)/dt=a[y(t)‒x(t)]; dy(t)/dt=‒x(t)z(t)+bx(t)‒y(t); dz(t)/dt=x(t)y(t)‒z(t), with a=3.0, b=26.5, and the initial conditions x(0)=0.4, y(0)=0.1, z(0)=4.1. Properties x, y and z and time, t, in arbitrary units.
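As a minimal sketch, the equations of motion quoted in the caption of Figure 1 can be integrated with a hand-written fourth-order Runge-Kutta scheme. The integrator, the step size dt=0.01 and the number of steps are assumptions made here for illustration, not the numerical setup behind the figure. The first equation is taken in the standard Lorenz form, dx/dt=a(y‒x), which is likewise an assumption of this sketch.

```python
def lorenz_rhs(state, a=3.0, b=26.5):
    """Right-hand side of the three coupled equations, parameters from Figure 1."""
    x, y, z = state
    return (
        a * (y - x),          # standard Lorenz sign convention (assumption)
        -x * z + b * x - y,
        x * y - z,
    )


def rk4_step(f, state, dt):
    """Advance `state` by one classical fourth-order Runge-Kutta step."""
    k1 = f(state)
    k2 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = f(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt * (p + 2.0 * q + 2.0 * r + w) / 6.0
        for s, p, q, r, w in zip(state, k1, k2, k3, k4)
    )


def integrate(state=(0.4, 0.1, 4.1), dt=0.01, steps=5000):
    """Return the list of (x, y, z) points visited, starting from `state`."""
    trajectory = [state]
    for _ in range(steps):
        state = rk4_step(lorenz_rhs, state, dt)
        trajectory.append(state)
    return trajectory


trajectory = integrate()
xs, ys, zs = zip(*trajectory)
print("x range:", min(xs), max(xs))
print("z range:", min(zs), max(zs))
```

Plotting `xs` against `zs` (for instance with a plotting library of choice) gives the parametric view of the trajectory; the printed ranges give a quick check that the motion stays within a finite domain.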

This (Lorenz) attractor is the most widely cited example of what has come to be known as deterministic chaos, which nowadays is part of the more general complexity theory.[22] These methods provide well-defined physical and mathematical examples of processes that generate complex regular patterns from unorganized and often randomly distributed interactions among their parts. In many respects they can legitimately be categorized as emergent phenomena, because the regular higher-level patterns generated by otherwise unorganized dynamic interactions at the lower level are no mere summations of lower-level properties and exhibit characteristics that have no lower-level counterparts. Furthermore, it seems highly likely that “self-organization”, which can arise unbidden in systems fed with either energy, as above, or matter, as in the Brusselator[23] (see Appendix), in a way that prevents them from achieving a static equilibrium, may have a part to play in life’s orderliness, though it must be kept in mind that life is more than the emergence of “wavy properties” over time.

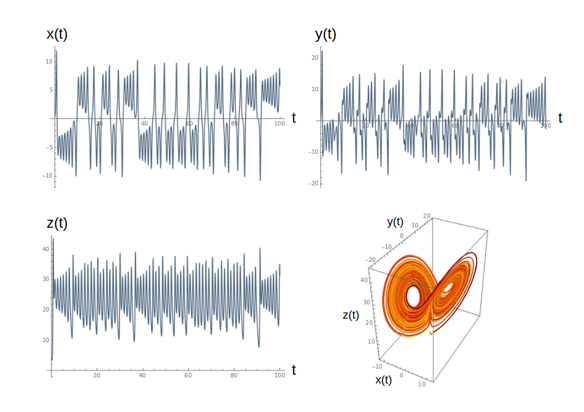

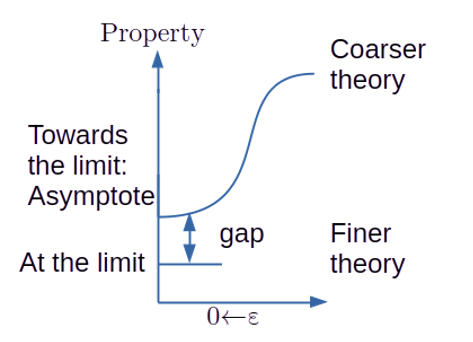

The second refers to the so-called asymptotic reasoning procedure[24]. In short, there are theories coupled by taking the limit of some critical parameter, namely lim(ε→0) Tf = Tc, where Tf stands for a finer (more fundamental) theory and Tc for a coarser theory. When the limit-taking operation is “regular”, it represents a case of theory reduction: Tf is reduced to Tc at ε=0. When it is “singular”, one can talk only about “inter-theoretic relations”. Exemplars of the former include Quantum Mechanics (finer) and Classical Mechanics (coarser), for ε=ℏ, and Special Relativity (finer) and Newtonian Mechanics (coarser), for ε=(𝑣/c), with “𝑣” being the velocity of bodies that move slowly compared to the speed of light “c”. An exemplar of the latter is Schrödinger Quantum Mechanics (finer) and Molecular Electronic Structure Theory (coarser), for ε=𝑚/M, with “𝑚” being the mass of the electron and “M” that of the proton.

Notice the essential role of the singular nature of the limiting behavior of some properties, which may change abruptly at the limit because their behavior as the limit is approached is radically at variance with the one exhibited at the limit itself. Notice also that this does not require different levels of physical organization; everything takes place at one single level. This is why it is occasionally referred to as epistemological emergence.
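The contrast between a regular and a singular limit can be illustrated numerically with two toy cases. Both are assumptions introduced here purely for illustration and are not taken from the cited literature: the Lorentz factor γ=1/√(1‒ε²) with ε=𝑣/c as a regular limit, and the textbook quadratic εx²+x‒1=0 as a singular one.

```python
import math


def lorentz_factor(eps):
    """Regular limit: gamma = 1/sqrt(1 - eps**2), with eps = v/c, tends smoothly to 1."""
    return 1.0 / math.sqrt(1.0 - eps * eps)


def quadratic_roots(eps):
    """Roots of eps*x**2 + x - 1 = 0 for eps > 0 (toy singular-limit example).

    At eps = 0 exactly the equation is x - 1 = 0 and has a single root,
    whereas for any eps > 0 there are two roots.
    """
    disc = math.sqrt(1.0 + 4.0 * eps)
    regular_root = 2.0 / (1.0 + disc)            # stays finite as eps shrinks
    runaway_root = -(1.0 + disc) / (2.0 * eps)   # grows without bound as eps shrinks
    return regular_root, runaway_root


for eps in (1e-1, 1e-2, 1e-3, 1e-4):
    regular_root, runaway_root = quadratic_roots(eps)
    print(f"eps={eps:.0e}  gamma={lorentz_factor(eps):.8f}  "
          f"roots=({regular_root:.6f}, {runaway_root:.1f})")
```

In the first case the ε=0 description is recovered continuously. In the second, one solution of the ε>0 problem has no counterpart at ε=0, which is the kind of abrupt change at the limit that the asymptotic reasoning argument relies on.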

Asymptotic reasoning (AR) has spurred a lively debate about the explanatory value of theories.[25] According to AR, the finer theory cannot provide an explanation of those properties of the coarse regime that show singular behavior at the ε→0 limit, as pictorially illustrated in Figure 2. One clearly needs to bridge the gap in order to extend the explanatory adequacy of the finer theory over the coarser regime.

Figure 2. Limiting process of a “Property” which depends on parameter, ε, when it is taken to zero.

The critics challenge the explanatory inadequacy ascribed to the finer theory by claiming that it should contain, at least in an approximate sense, the elements of physical interpretation of the coarser theory. Hence, one can replace the elements of physical interpretation of the coarser theory by those of the finer one and, if such an interpretation provides understanding, then the finer theory is perfectly adequate as an explanation.[26] The discussion, however, has (apparently) come to an end[27] with the observation that such replacements are not necessarily conducive to “understanding”. The alternative provided by AR consists of assimilating key aspects of the coarser theory into the finer theory in order to bridge the gap in the asymptotic domain.

The case of electronic structure theory is paradigmatic in this respect. Including classically behaving nuclei in the quantum Schrödinger equation renders an adequate explanation of the chemical bond[28], and furthermore opens avenues for new concepts, like adiabatic and non-adiabatic molecular quantum states, which in turn provide adequate explanations for many phenomena associated with the dynamics of chemical bonds.

Following these developments, the concept of emergence has spread to many fields, in the natural and social sciences alike.[29] It is regularly applied whenever the spontaneous generation of regular, stable and robust patterns arises from the concerted unorganized interactions of component parts, and whenever the singular behavior of the limiting relationship between finer and coarser theories turns out to be relevant to the phenomena of interest, in such a way that it prevents theory reduction and prompts the emergence of key bridging features in the limiting asymptotic region.

Without trying to be exhaustive, let us comment briefly on the specific ways in which emergence enters a number of social science disciplines.

Thus, admitting that human behavior is not predictable in the way physical phenomena are, and that Economics lacks “universal” empirical regularities, the well-documented existence of so-called “stylized facts”, i.e., common patterns observed across different economic data sets, lends support to the use of the theory of complex systems to construct models of the global economy.[30] In particular, financial crises could be associated with “critical points”, and one can speak about the “turbulence” exhibited by financial data. Consequently, emergence appears as an efficient explanatory element. However, due caution must be exercised, for such adequacy suffices to justify a “formal” analogy between the behavior of economic systems and that of complex systems, but by no means does it justify a “material” analogy. A formal analogy serves descriptive purposes and entails (some) predictive power. But it lacks explanatory power, in a causal sense, because it does not tell us which causal relations make up the fabric of the system of interest. Hence, it prevents us from knowing which interventions could be effective in achieving a given goal. To this end, a material analogy between the economic reality and the tenets of complex systems must be constructed alongside the formal one. This requires additional “idealizations” of the economic system. Some models are amenable to such idealizations, others are not.[31]

However, other social science disciplines have recently opted for examining emergent phenomena without grounding them in complexity theory. Law studies constitute a canonical exemplar of this move. Thus, in the recent essay by Tamanaha[32], five additional emergent traits are identified in order to rationalize the transition from simple rules of social intercourse (relations among individuals) to the more complex and holistic rule-of-law societies[33]. All five of Tamanaha’s traits pose tough challenges to the configuration of law, and portray rule-of-law societies as emergent wholes whose existence requires profound social transformations. Such transformations involve the rise of social organizations, and come together with the establishment of legally regulated societies. However, its emergent nature confers a distinctive status on the rule-of-law society. Legally regulated societies can exist alongside an authoritarian policy that does not operate under the constraint of legal regulations.[34]

Emergence theory has been found suitable to account for, and put into proper perspective, some of the legal challenges recently posed by the deployment of technologies that use advanced machine-learning-powered artificial intelligence (AI), which basically belong to the private law domain[35]. In particular, two legal aspects have been considered in this respect: (i) the attribution problem and (ii) the intellectual property law problem.

Consider the first case first. The autonomy of AI systems stems from their ability to learn and act accordingly in circumstances not foreseen by their creators. This can clearly be seen as an emergent property. For that reason, attributing liability in such instances may lead to the fallacy of division, which consists in attributing properties of the whole to its parts. Notice that in tort law this scenario could result in a “victim without perpetrator” case, and lead to the denial of compensation in a situation involving AI technologies, whereas there would certainly be compensation in a functionally equivalent case involving conventional technologies. The surge of AI-using technologies makes it harder to establish the “proof of causation”.

For the latter case, imagine a telescope coupled to an AI assistant program which autonomously instructs the telescope where to aim. Now, imagine that it discovers an unknown planet and subsequently, by analyzing the received light spectrum, probes its “atmosphere”, which yields sound evidence for the presence of living organisms on that planet. Then, the AI assistant calls ChatGPT to write a manuscript, which is submitted for publication. After going through the usually demanding peer-review procedure, the manuscript is accepted and published. Stretch your imagination a bit further and suppose that this research is awarded the Nobel Prize. Now ask yourself: to whom will the Prize be presented in Stockholm? Cases like this, perhaps less dramatic, are or will surely be ubiquitous in the creative and/or innovative arts, for example. The problem of ascribing authorship and intellectual property arises from the fact that the operation of AI programs cannot be traced back directly to a single identifiable “human source”, which clashes with current intellectual property law’s fixation on human actors as the center of all creativity.

The discussion based on emergent properties merges the law and technology approaches, and as such can help identify the circumstances under which technological changes give rise to legal problems. In particular, it may explain how the aggregation of a number of perfectly compliant acts may result in a whole which is not compliant with the law. This emphasizes the need to recognize emergence as an important asset for putting complex legal problems in proper perspective.

In sociology, emergence theory has been mainly engaged with the so-called “Collectivist theory” of social phenomena, and has recently attracted great interest as a promising response to the so-called “Methodological Individualistic” approach, which contends that the micro-to-macro emergence of social phenomena starts from individuals’ actions, so that the explanation of emergent social behavior(s) should not be seen as incompatible with reduction to individual-level explanation.

Contemporary sociology theorists, though, draw explicitly on emergence, which within this context is referred to as the “Collective view of social behavior emergence”. Nowadays, at least three distinct flavors can be distinguished. Firstly, Blau’s structuralist view[36] maintains that the major terms of macro-sociological analysis refer to emergent properties of population structures that have no equivalent in micro-sociological prospection. Secondly, Bhaskar’s transcendental realism[37] holds that social reality is stratified and that, although it is ontologically dependent on the individual actors on which it supervenes, it is irreducible to them and even autonomous from them. Bhaskar maps emergentism and realism 1:1, meaning that realistic explanations correspond to genuinely emergent phenomena, and that emergent phenomena require realistic explanations. Thirdly, Archer, in her morphogenetic dualism approach[38], argues that social behavior emerges from individual behaviors which are prior to the emergent behavior. Once such social behavior has emerged, it becomes autonomous of its emergence base, and such autonomy entails independent causal influences in its own right. It is the emergence “over time” (morphogenesis) that makes emergent structures real and allows them to constrain individuals via downward causation.

All three approaches have been thoroughly reviewed, and some of their deficiencies and shortcomings, such as the lack of clarity in their accounts of downward causation mechanisms or of the supervenience relation between the societal and individual levels, have recently been highlighted.[39]

Ugalde, Jesus M.

Echeverría, Javier

Zabala, Jon Mikel

Editors in Chief

3. Summary

The ubiquitous reductionist approach that has plagued (almost) all areas of all sciences, fueled by the utilitarian character of some contemporary research programs, has placed too much emphasis on reporting “unrelated” observations, and has stripped away the bigger-picture view, which consists in integrating the observations into newly emergent patterns.[40] In this vein, Emergentism has lately made its (re)debut and helped to loosen the tightly constrained views of the reductionist approach and to open up a broader and richer domain for the scientific endeavor. After all, “unrelated” knowledge is confusing and utterly useless, to say the least. The bigger picture matters.

Emergentism appears in various diverse disciplines.[41] Despite the slight differences of meaning among them, the concept of emergence is agreed to refer to the arising of robust, novel properties and patterns due to some singular ways of organizing the parts of the system into the whole. The process of organization needs to generate complexity at a higher-level, from which the new properties emerge by averaging out irrelevant details and bolstering the essence of the robust new property or pattern. Emergent phenomena share a number of features[42] that identify them as emergent: (i) Radical Novelty, (ii) Robustness and Coherency, (iii) Complexity at the higher levels of organization, (iv) Ostensiveness: emergents are “ostensively” recognized by showing themselves. This sets a common ground for natural and social sciences alike which enables direct interdisciplinarity to be exercised in a natural way.

All in all, this issue collects a number of selected scholarly contributions aimed (i) at illustrating how emergent phenomena appear across a number of diverse disciplines, and (ii) at showing that, once stripped of subjective impressions, serendipitous novelty[43] or merely epiphenomenal concurrence, emergence studies deserve to be pushed beyond any sort of well-trodden metaphorical value into the realm of rigorous scholarly investigation.

Appendix

The Brusselator is a canonical example of a chemical oscillator, a class of complex systems exhibiting fundamental differences with respect to the more familiar mechanical oscillators. When a chemical reaction oscillates, it never goes through its equilibrium state. Indeed, chemical oscillation phenomena appear under far-from-equilibrium conditions. In this vein, Prigogine et al., in Brussels, pointed out that open systems, i.e., systems that can exchange either matter or energy with their environments, when kept far from equilibrium, can also self-organize by dissipating energy or matter to compensate for the entropy reduction in the system, and so sustain oscillations mediated by a continuous exchange of matter/energy with the environment. The spatial or temporal structures that arise in this way are called dissipative structures.

The Brusselator consists of an open chemical system undergoing the (i)‒(iv) reactions, annotated below:

(i) A→X

(ii) 2X+Y→3X

(iii) B+X→Y+D

(iv) X→E

The overall reaction is A+B→D+E. The concentrations of these four species are kept constant: A and B are supplied to, and D and E are removed from, the reactive domain. The concentrations of the intermediate species X and Y are allowed to vary in time. Consequently, the rate equations (equations of motion) for species X and Y are:

(v) $dx(t)/dt = a + x(t)^{2}\,y(t) - b\,x(t) - x(t)$

(vi) $dy(t)/dt = b\,x(t) - x(t)^{2}\,y(t)$

Notice that we have set all rate constants $k_i = 1$ (i = i–iv) for convenience.
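The rate equations (v)–(vi) can be explored numerically. The following is a minimal sketch, not the procedure used to produce the figures of this appendix: it assumes the feed term of reaction (i) enters with a positive sign (a + x²y − bx − x), which is the form consistent with the steady state (x=a, y=b/a), and it uses a hand-written fourth-order Runge–Kutta integrator. The function names, the step size `dt`, and the final time `t_end` are illustrative assumptions.

```python
def brusselator_rhs(x, y, a, b):
    """Right-hand sides of the rate equations with all rate constants set to 1.

    Assumption: the feed term of reaction (i) is taken as +a.
    """
    dx = a + x * x * y - b * x - x
    dy = b * x - x * x * y
    return dx, dy


def integrate(a, b, x0, y0, t_end=200.0, dt=0.01):
    """Classical fourth-order Runge-Kutta integration; returns lists t, x, y."""
    n_steps = int(round(t_end / dt))
    t, x, y = 0.0, x0, y0
    ts, xs, ys = [t], [x], [y]
    for _ in range(n_steps):
        k1x, k1y = brusselator_rhs(x, y, a, b)
        k2x, k2y = brusselator_rhs(x + 0.5 * dt * k1x, y + 0.5 * dt * k1y, a, b)
        k3x, k3y = brusselator_rhs(x + 0.5 * dt * k2x, y + 0.5 * dt * k2y, a, b)
        k4x, k4y = brusselator_rhs(x + dt * k3x, y + dt * k3y, a, b)
        x += dt * (k1x + 2.0 * k2x + 2.0 * k3x + k4x) / 6.0
        y += dt * (k1y + 2.0 * k2y + 2.0 * k3y + k4y) / 6.0
        t += dt
        ts.append(t)
        xs.append(x)
        ys.append(y)
    return ts, xs, ys


def late_time_swing(values, fraction=0.25):
    """Peak-to-peak amplitude over the last `fraction` of the run."""
    tail = values[int(len(values) * (1.0 - fraction)):]
    return max(tail) - min(tail)


# The two parameter sets and the two initial conditions discussed in the text.
for a, b in [(1.0, 2.2), (1.05, 1.70)]:
    for x0 in (0.0, 2.0):
        ts, xs, ys = integrate(a, b, x0, x0)
        print(f"a={a}, b={b}, x(0)=y(0)={x0}: "
              f"late-time swing of x = {late_time_swing(xs):.4f}")
```

A late-time swing that stays well above zero signals sustained oscillations, whereas a swing that vanishes signals relaxation to constant concentrations. Plotting `ys` against `xs` gives the t-parametric trajectory in the (x, y) plane.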

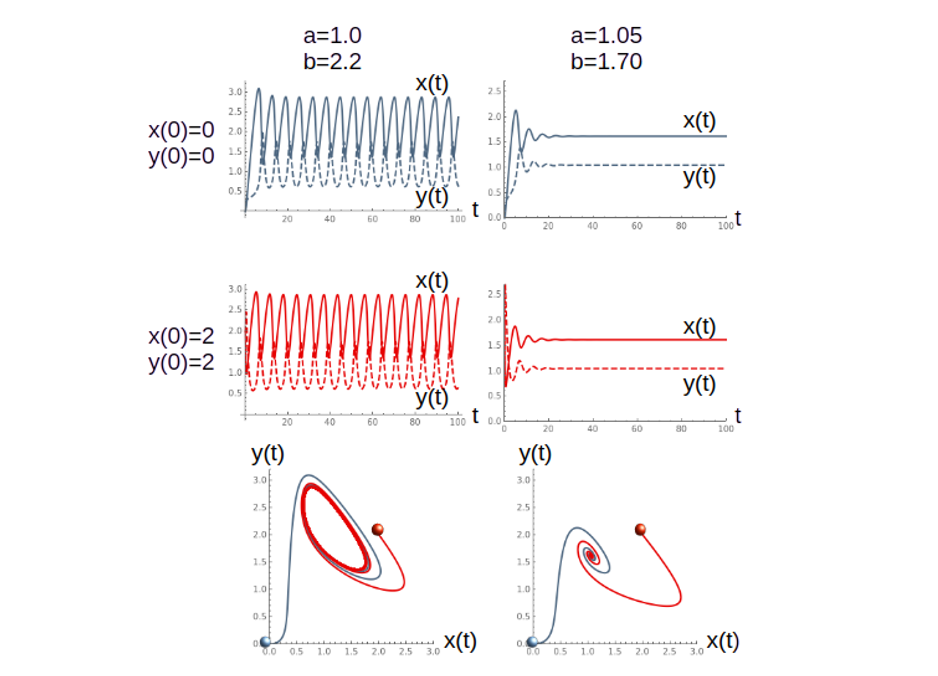

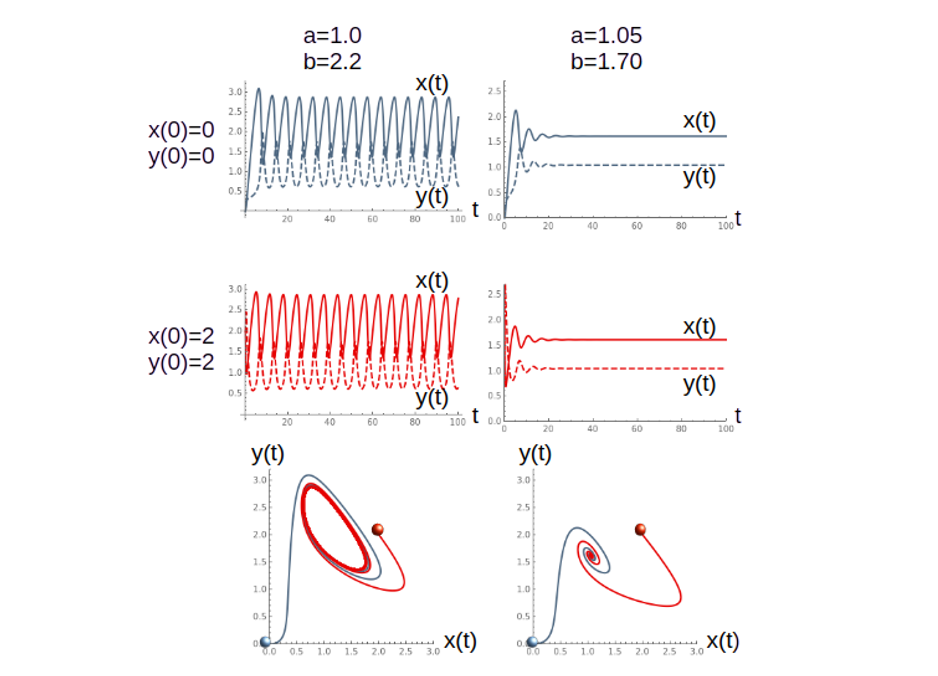

The solutions of the coupled rate equations (v)–(vi) for two sets of steady concentrations of species A and B, and two sets of initial concentrations of X and Y, are shown in Figure A1. The concentrations x(t) and y(t) of species X and Y behave differently depending on the fixed concentrations of A and B, but irrespective of their initial values x(0), y(0). Thus, when a=1.0, b=2.2, both concentrations, after an initial jump, settle into oscillations of the same frequency and amplitude for both x(0)=y(0)=0 and x(0)=y(0)=2, as shown in the top two panels on the left-hand side of Figure A1. Similarly, when a=1.05, b=1.70, after the initial jump the concentrations of X and Y behave identically irrespective of the initial concentrations x(0)=y(0)=0 and x(0)=y(0)=2, as shown in the top two panels on the right-hand side of Figure A1. However, the two behaviors are radically different: the former features sustained oscillations, whereas the latter features a flat, constant concentration over time.

The bottom panels of Figure A1 show the corresponding t-parametric plots of y(t) vs. x(t). Thus, the bottom left panel shows y(t) vs. x(t) for a=1.0, b=2.2 and both x(0)=y(0)=0 (blue curve) and x(0)=y(0)=2 (red curve). Note that, although they begin at very different points (highlighted by their corresponding dots), both trajectories end up on the so-called limit cycle that encircles the fixed point located at (x=a, y=b/a).

Conversely, for the steady concentrations a=1.05, b=1.70, both trajectories fall onto the attractor, here the point (x=a, y=b/a) itself, irrespective of their initial point, as shown in the bottom right panel.

Figure A1: Solutions x(t) and y(t) of the coupled equations of motion (v)–(vi) (top four panels) for selected steady concentrations a and b of species A and B and initial concentrations x(0), y(0), together with the t-parametric plots of the corresponding trajectories (bottom two panels). The initial points are highlighted by dots. Concentrations and time are in arbitrary units.

The critical value for this behavior is $b_c = 1 + a^{2}$. Thus, for $b > b_c$ the system presents an oscillatory pattern in the concentrations of both species X and Y over time, while for $b < b_c$ the concentrations relax to constant values, as in the right-hand panels of Figure A1.
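The critical value can be recovered from a standard linear stability analysis of the uniform steady state. The derivation below is a sketch under one stated assumption: the feed term of reaction (i) is taken with a positive sign, since that is the form that yields the steady state (x=a, y=b/a). The symbols f, g, J and λ are introduced here for illustration only.

```latex
% Linear stability of the uniform steady state of (v)-(vi), feed term taken as +a
\begin{aligned}
f(x,y) &= a + x^{2}y - (b+1)\,x, \qquad g(x,y) = b\,x - x^{2}y,\\
g = 0 &\;\Rightarrow\; y^{*} = b/x^{*}, \qquad
f = 0 \;\Rightarrow\; x^{*} = a,\quad y^{*} = b/a,\\
J &= \begin{pmatrix} \partial_x f & \partial_y f\\ \partial_x g & \partial_y g \end{pmatrix}_{(x^{*},\,y^{*})}
   = \begin{pmatrix} b-1 & a^{2}\\ -b & -a^{2} \end{pmatrix},\\
\operatorname{tr} J &= b - 1 - a^{2}, \qquad \det J = a^{2} > 0,\\
\lambda_{\pm} &= \tfrac{1}{2}\left[\operatorname{tr} J \pm \sqrt{(\operatorname{tr} J)^{2} - 4a^{2}}\,\right].
\end{aligned}
```

Because det J is always positive, the real parts of both eigenvalues carry the sign of tr J. The steady state is therefore stable for b < 1 + a² and unstable for b > 1 + a², and at b = 1 + a² the eigenvalues are purely imaginary, λ = ±ia, which marks the onset of oscillations. For the two cases considered above, a=1.0 gives a threshold of 2.0, below b=2.2, while a=1.05 gives 2.1025, above b=1.70.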

When spatial diffusion is taken into account, the modified equations of motion can be expressed as:

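(vii) $\partial x(r,t)/\partial t = a + x(r,t)^{2}\,y(r,t) - b\,x(r,t) - x(r,t) + \mathcal{D}_x\,\partial^{2}x(r,t)/\partial r^{2}$

(viii) $\partial y(r,t)/\partial t = b\,x(r,t) - x(r,t)^{2}\,y(r,t) + \mathcal{D}_y\,\partial^{2}y(r,t)/\partial r^{2}$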

where the 𝒟’s stand for Fick’s diffusion coefficients and r for the spatial coordinate. The solutions x(r,t), y(r,t) of the coupled differential equations (vii)–(viii) reveal that, in addition to the limit cycle, non-uniform steady states may also appear. Turing was the first to draw attention to this kind of state in open reactive chemical systems, in his seminal paper on morphogenesis; in that setting the limit cycle also becomes space dependent and leads to the so-called chemical waves.
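The appearance of non-uniform steady states can be illustrated with a small one-dimensional simulation. This is a rough sketch under several assumptions that do not come from the text: the feed term is taken as +a, the domain is periodic, time stepping is explicit Euler with central differences in space, and the parameter values (a=2, b=3.5, diffusion coefficients 1 and 8, grid size, time step) are chosen purely for illustration so that the uniform state is stable without diffusion (b < 1 + a²) but unstable to spatial perturbations when Y diffuses much faster than X.

```python
import random


def simulate(a, b, diff_x, diff_y, n=64, length=32.0,
             t_end=60.0, dt=0.005, seed=0):
    """Integrate the 1D reaction-diffusion equations on a periodic grid.

    Explicit Euler in time, second-order central differences in space.
    All numerical settings are illustrative assumptions.
    """
    dr = length / n
    rng = random.Random(seed)
    # Start from the uniform steady state (a, b/a) plus small random noise.
    x = [a + 0.01 * (rng.random() - 0.5) for _ in range(n)]
    y = [b / a + 0.01 * (rng.random() - 0.5) for _ in range(n)]
    for _ in range(int(round(t_end / dt))):
        new_x = [0.0] * n
        new_y = [0.0] * n
        for i in range(n):
            left, right = (i - 1) % n, (i + 1) % n
            lap_x = (x[left] - 2.0 * x[i] + x[right]) / dr ** 2
            lap_y = (y[left] - 2.0 * y[i] + y[right]) / dr ** 2
            xxy = x[i] * x[i] * y[i]
            new_x[i] = x[i] + dt * (a + xxy - b * x[i] - x[i] + diff_x * lap_x)
            new_y[i] = y[i] + dt * (b * x[i] - xxy + diff_y * lap_y)
        x, y = new_x, new_y
    return x, y


def spread(values):
    """Difference between the largest and smallest value along the grid."""
    return max(values) - min(values)


# Unequal diffusion: Y spreads much faster than X.
x_unequal, _ = simulate(a=2.0, b=3.5, diff_x=1.0, diff_y=8.0)
# Equal diffusion: same chemistry, same initial noise.
x_equal, _ = simulate(a=2.0, b=3.5, diff_x=1.0, diff_y=1.0)

print("spatial spread of x, unequal diffusion:", spread(x_unequal))
print("spatial spread of x, equal diffusion:  ", spread(x_equal))
```

With equal diffusion coefficients the initial noise dies out and the profile returns to the uniform value x=a. With strongly unequal coefficients the same noise is amplified into a spatially periodic profile, which is the kind of non-uniform state referred to above. An explicit scheme is used only for transparency; it requires the time step to stay below roughly dr²/(2𝒟) for the larger diffusion coefficient.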

[1]Adam Smith, “The Wealth of Nations (1776)”, p. 423, Modern Library, New York (2004).

[2]W. C. Wimsatt, “The Ontology of Complex Systems. Levels of Organization, Perspectives, and Causal Thickets”. Can. J. Philo. Suppl. Vol. 20, 207‒274 (1994). doi:10.1080/00455091.1994.10717400.

[3]M. Bunge; “Emergence and Convergence. Qualitative Novelty and the Unity of Knowledge”, Toronto University Press, Toronto, Canada (2014).

[4]Leibniz published his calculus in 1684. See: J. Echeverría, “Leibniz, el archifilósofo”, Plaza y Valdés, Madrid (2023). ISBN:978-84-17121-72-3

[5]D. Chandler, “Is the Universe a giant (quantum) computer?”, Nature, 620, 943‒045 (2023)

[6]S. Hawking in his “Brief History of Time” (Bantam Press, London, UK, 1998) wrote: “… I still believe there are grounds for cautious optimism that we may now be near the end of the search for the ultimate laws of nature”. Chapter 11.

[7]R. B. Laughlin and D. Pines; “The Theory of Everything”, Proc. Natl. Acad. Sci., 97, 28–31 (2000)

[8]K. Gödel; “Some Basic Theorems on the Foundations of Mathematics and their Implications”, Collected Works. Vol. II. Unpublished Essays and Lectures. S. Feferman et al., eds. Pages 304–323. Oxford University Press, New York (1995)

[9]H. Primas; “Chemistry, Quantum Mechanics and Reductionism”, Lecture Notes in Chemistry, Vol. 24, Springer-Verlag, Berlin (1983).

[10]H. Primas; “Theory Reduction of Non-Boolean Theories”, J. Math. Biology, 4, 281–302 (1977)

[11]J. A. de Azcárraga; “Reduccionismo y Emergencia, de nuevo”, Rev. Esp. Fis., 38 29–36 (2024)

[12]Notice that within this context “predict” stands for “not being any physical procedure for the determination of the higher- from the lower-level”.

[13]J. Kim, “Making Sense of Emergence”, Phil. Stud., 95, 3–36 (1999)

[14]P. Humphreys, (a) “How Properties Emerge”, Philosophy of Science, 64, 1–17 (1997). doi:10.1086/392533. (b) “Emergence not Supervenience”, Philosophy of Science, 64, S337–S345 (1997). doi:10.1086/392612.

[15]P. A. Corning, “The Re-Emergence of Emergence: A Venerable Concept in Search of a Theory”, Complexity, 7, 18–30 (2002)

[16]F. Eugene Yates, A. Garfinkel, D. O. Walter, G. B. Yates (eds.) “Self-Organizing Systems: The Emergence of Order”, Springer, New York (2012)

[17]P. Larrañaga, V. P. Soloviev. “Elements of Complex Networks”, Rev. Int. Estud. Vascos, 68, 2 (2023).

[18]S. Wolfram, “Twenty Years of a New Kind of Science”, Wolfram Media, Inc., (2023)

[19]E. N. Lorenz, “Deterministic Nonperiodic Flow”, J. Atmos. Sci., 20, 130–141 (1963)

[20]See the caption of Figure 1 for the detailed equations-of-motion.

[21]This brings to the fore the importance of modern graphical processing techniques for properly visualizing these highly non-linear dynamical processes “in action”.

[22]S. Thurner, R. Hanel, P. Klimek, “Introduction to Theory of Complex Systems”, Oxford University Press, New York (2016).

[23]G. Nicolis and I. Prigogine, “Self-organization in nonequilibrium systems: From dissipative structures to order through fluctuations”. Wiley, New York (1977). See also: A. M. Turing “The Chemical Basis of Morphogenesis”, Phil. Trans. Roy. Soc. Lond., B237, 37 (1952), for an in-depth discussion of the additional effect of spatial diffusion for the emergence of morphogenesis.

[24]R. W. Batterman, “The Devil in the Details”, Oxford University Press, New York (2002)

[25]M. Redhead, “Discussion Note: Asymptotic Reasoning”, Studies in History and Philosophy of Modern Physics, 35(B), 527–530 (2004)

[26]G. Belot, “Whose Devil? Which Details?”, Phil. Sci., 72, 128–153 (2005)

[27]R. W. Batterman, “Response to Belot’s ‘Whose Devil? Which Details?’”, Phil. Sci., 72, 154–163 (2005)

[28]G. Frenking and S. Shaik, “The Chemical Bond”, Vol I, II; Weinheim, Germany (2014)

[29]O. Artime, M. De Domenico, “From the Origin of Life to Pandemics: Emergent Phenomena in Complex Systems”, Phil. Trans. R. Soc. A 380, 20200410 (2022).

[30]T. Cooley, ed. “Frontiers of Business Cycle Research”. Princeton University Press, (1995).

[31]P. Palacios, J. S. Jhun, “Statistical Mechanical Models of Finance”, in The Routledge Handbook of Philosophy of Scientific Modeling, London (2024).

[32]B. Tamanaha, “Law’s Evolving Emergent Phenomena: From Rules of Social Intercourse to Rule of Law Society”, Washington Univ. Law Rev., 95, 1149–1186 (2018).

[33]Stripped of all technicalities, this refers to a governance whose actions are bound by rules fixed and announced beforehand. Such rules should make it possible to foresee with fair certainty how the authority will exercise its coercive powers in all given circumstances, so that it enables individuals to plan their actions based on this knowledge. See: L. Fuller, “The Morality of Law”; p. 38–39, Yale University Press, USA (1969).

[34]R. Geuss, “History and Illusion in Politics”, Cambridge Univ. Press, London (2010).

[35]S. Esayas, “The Important Role of Emergence in Conceptualizing the Challenges of New Technologies to Private Law”, Eur. Private Law Rev. 31, 779–822 (2023).

[36]P. M. Blau and R. K. Merton, “Microprocesses and Macrostructure”, p. 83–100, in “Social Exchange Theory”, K. S. Cook, Ed., Sage, Newbury Park, USA (1987).

[37]R. Bhaskar, “Emergence, Explanation and Emancipation”, p. 275–310, in “Explaining Human Behavior”, P. F. Secord, Ed., Sage, Beverly Hills, USA (1982).

[38]M. S. Archer, “Realistic Social Theory: The Morphogenetic Approach”, Cambridge Univ. Press, New York, USA (1995).

[39]R. Keith Sawyer, “Emergence in Sociology: Contemporary Philosophy of Mind and Some Implications for Sociological Theory”, Am. J. Sociology, 107, 551–585 (2001)

[40]“There are rushing waves … mountains of molecules, each stupidly minding its own business … trillions apart … yet forming white surf in unison”. Quoted from: R. P. Feynman; “The Value of Science”, Engineering and Science, 19, 13–15 (1955).

[41]E. Onnis; “Emergence: A Pluralistic Approach”, Theoria, 38, 339–355 (2023). doi:10.1387/theoria.23972.

[42]J. Goldstein; “Emergence as a Construct: History and Issues”, Emergence: Complexity & Organization, 1, 49–72 (1999). doi:10.1207/s15327000em0101_4.

[43]J. Holland; “Emergence: From Chaos to Order”, Addison-Wesley, Reading, MA, USA (1998).